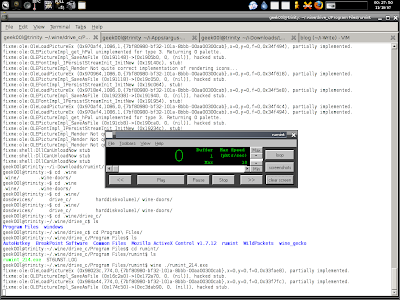

When performing network traffic sniffing, capture or inspection, most of us use the sniffer called tcpdump (to me "sniffer" is not quite the right term, but let's ignore that here). Sun developed its own sniffer, called snoop. I think snoop is useful for people who run SunOS-based servers when it comes to debugging network traffic. Anyway, I'm trying all of this on Nexenta OS, which I came across lately, and hopefully this blog post will be useful to me if I ever need to perform reactive Network Security Monitoring operations on SunOS in the future.

Before doing anything with snoop, I checked out the man page -

shell>man snoop

If you can't find the man page for a command you want to use, you can try this too -

shell>info snoop

Snoop also has primitive support for filter expressions. They are pretty similar to BPF filtering, though I haven't really looked into it much. Just like tcpdump -d, snoop has -C to print the code generated from the filter expression for either the kernel packet filter or snoop's own filter. For example -

shell>sudo snoop -C ip

Kernel Filter:

0: PUSHWORD 6

1: PUSHLIT EQ

2: 129 (0x0081)

3: BRFL

4: 3 (0x0003)

5: LOAD_OFFSET

6: 2 (0x0002)

7: POP

8: PUSHWORD 6

9: PUSHLIT EQ

10: 8 (0x0008)

I haven't really dug into it to understand the code like I did for tcpdump -d here.

By default, snoop captures the whole packet unless you specify the snap length with -s (same as tcpdump). The man page has a very good tip on using the -s option which I would like to show here, as it can be useful for tcpdump users too -

Truncate each packet after snaplen bytes. Usually the whole packet is captured. This option is useful if only certain packet header information is required. The packet truncation is done within the kernel giving better utilization of the streams packet buffer. This means less chance of dropped packets due to buffer overflow during periods of high traffic. It also saves disk space when capturing large traces to a capture file. To capture only IP headers (no options) use a snaplen of 34. For UDP use 42, and for TCP use 54. You can capture RPC headers with a snaplen of 80 bytes. NFS headers can be captured in 120 bytes.
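Those man page figures are just sums of fixed header sizes, and the IP IHL field and the TCP data-offset field both count 32-bit words. Here is a minimal Python sketch of that arithmetic, assuming untagged Ethernet II framing (an 802.1Q VLAN tag would add 4 bytes). The `snaplen` function and its parameters are my own illustration, not part of snoop:

```python
# Minimal sketch: snaplen needed to keep all headers of a packet.
# Assumes untagged Ethernet II framing; an 802.1Q VLAN tag would add 4 bytes.
ETHERNET_HEADER = 14
UDP_HEADER = 8


def snaplen(ip_ihl=5, transport=None, tcp_data_offset=5):
    """Return the number of header bytes to capture.

    ip_ihl and tcp_data_offset are the raw header fields, which count
    32-bit words, so 5 means a 20-byte header with no options.
    """
    length = ETHERNET_HEADER + ip_ihl * 4
    if transport == "udp":
        length += UDP_HEADER
    elif transport == "tcp":
        length += tcp_data_offset * 4
    return length


print(snaplen(), snaplen(transport="udp"), snaplen(transport="tcp"))  # 34 42 54
```

For example, a TCP header carrying 12 bytes of options has a data offset of 8, so `snaplen(transport="tcp", tcp_data_offset=8)` gives 66 rather than 54, which is worth keeping in mind when relying on the fixed values.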

That's really neat -

- Ethernet header (14) + IP header without options (20) = 34
- Ethernet header (14) + IP header without options (20) + UDP header (8) = 42
- Ethernet header (14) + IP header without options (20) + TCP header without options (20) = 54

To make sure we are capturing IP headers without options, we can also use a filter such as -

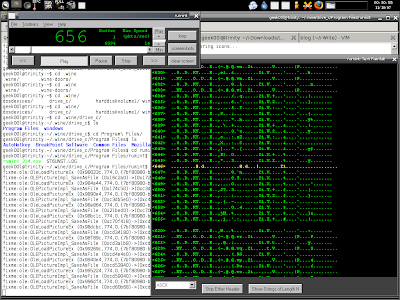

ip[0] & 0x0F = 5

The netstat -i output tells me I can capture on my network interface ae0, so here's what I do with snoop to log the network packets to a file -

shell>sudo snoop -q -r -d ae0 -o testing.snp

By default snoop prints the count of packets seen on your network interface; -q (quiet mode) turns that off. You can also specify -D to monitor the number of packets dropped during the capture period, which is extremely useful for making sure you don't miss any packets. The -r option is just like -n in tcpdump: it turns off address resolution. The -o option writes the packets to a file, just like -w in tcpdump.

After logging to the file, I checked the file format -

shell>file testing.snp

testing.snp: Snoop capture file - version 2 (Ethernet)
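What file recognises here is the fixed 16-byte header that RFC 1761 defines for snoop capture files: the 8-byte pattern `snoop` followed by three zero bytes, then a 32-bit version number and a 32-bit datalink type, both big-endian. A minimal Python sketch of the same check (the function name is hypothetical, and only part of the RFC's datalink table is included):

```python
import struct

SNOOP_MAGIC = b"snoop\x00\x00\x00"  # RFC 1761 identification pattern
# Subset of the RFC 1761 datalink type codes.
DATALINK_TYPES = {0: "IEEE 802.3", 2: "IEEE 802.5 Token Ring", 4: "Ethernet", 8: "FDDI"}


def snoop_file_info(header):
    """Return (version, datalink name) from the first 16 bytes of a snoop file."""
    if len(header) < 16 or header[:8] != SNOOP_MAGIC:
        raise ValueError("not a snoop capture file")
    version, datalink = struct.unpack(">II", header[8:16])
    return version, DATALINK_TYPES.get(datalink, "datalink type %d" % datalink)
```

Calling it on `open("testing.snp", "rb").read(16)` should give `(2, 'Ethernet')` for a capture like the one above.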

You can read it with -

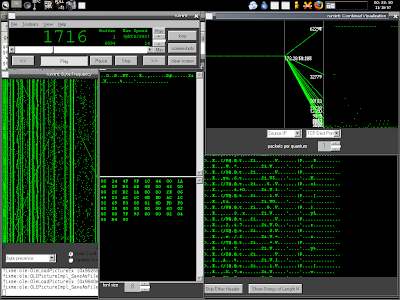

shell>snoop -t a -r -i testing.snp

1 0.00000 172.16.47.133 -> 172.16.47.2 DNS C _nfsv4idmapdomain.localdomain. Internet TXT ?

2 0.06758 172.16.47.2 -> 172.16.47.133 DNS R Error: 3(Name Error)

3 0.00047 172.16.47.133 -> 172.16.47.2 DNS C _nfsv4idmapdomain. Internet TXT ?

I like -t a, which prints the absolute time, similar to tcpdump -tttt. The -i option is just like the -r option in tcpdump: it reads the packet dump from a file. You may also notice that snoop numbers each packet in its output, and you can jump to a specific packet with the -p option. For example -

shell>snoop -t a -r -p 2 -i testing.snp

2 11:44:3.12067 172.16.47.2 -> 172.16.47.133 DNS R Error: 3(Name Error)

You can also specify a range: to show only packets 10 through 20, just specify -p 10,20.
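Those packet numbers are simply positions in the capture file, starting at 1. Per RFC 1761, each packet record after the 16-byte file header starts with a 24-byte big-endian header (original length, included length, record length, cumulative drops, timestamp seconds, timestamp microseconds), followed by the packet data and padding. Below is a minimal Python sketch that roughly mimics -p first,last together with absolute timestamps. `packets` and `make_record` are hypothetical helpers, and the sketch prints UTC, whereas snoop -t a shows local time:

```python
import datetime
import struct

MAGIC = b"snoop\x00\x00\x00"
FILE_HEADER_LEN = 16                   # magic (8) + version (4) + datalink type (4)
RECORD = struct.Struct(">IIIIII")      # orig len, incl len, record len, drops, secs, usecs
EPOCH = datetime.datetime(1970, 1, 1, tzinfo=datetime.timezone.utc)


def packets(data, first=1, last=None):
    """Yield (number, utc_time, cumulative_drops, packet) for packets first..last."""
    if data[:8] != MAGIC:
        raise ValueError("not a snoop capture file")
    offset, number = FILE_HEADER_LEN, 0
    while offset + RECORD.size <= len(data):
        _orig, incl, rec_len, drops, secs, usecs = RECORD.unpack_from(data, offset)
        if rec_len < RECORD.size + incl:
            raise ValueError("corrupt record at offset %d" % offset)
        number += 1                    # snoop numbers packets from 1
        if last is not None and number > last:
            break
        if number >= first:
            when = EPOCH + datetime.timedelta(seconds=secs, microseconds=usecs)
            start = offset + RECORD.size
            yield number, when, drops, data[start:start + incl]
        offset += rec_len              # record length already includes the padding


def make_record(secs, usecs, payload, drops=0):
    """Hypothetical helper: build one record, padded to a 4-byte boundary."""
    pad = -len(payload) % 4
    rec_len = RECORD.size + len(payload) + pad
    header = RECORD.pack(len(payload), len(payload), rec_len, drops, secs, usecs)
    return header + payload + b"\x00" * pad


# Synthetic five-packet capture (version 2, Ethernet), then the equivalent of -p 2,3.
capture = MAGIC + struct.pack(">II", 2, 4) + b"".join(
    make_record(1000 + n, 0, bytes(n + 1)) for n in range(5))
for number, when, drops, pkt in packets(capture, first=2, last=3):
    print(number, when.isoformat(), drops, len(pkt))
```

To try it on a real capture, pass `open("testing.snp", "rb").read()` instead of the synthetic `capture`. The cumulative drops field in each record holds the number of packets dropped so far during the capture.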

You can also use the -V option (verbose summary mode), which prints a human-readable summary line for each protocol layer in the packet instead of just the highest-level one -

shell>sudo snoop -t a -d ae0 -V

Using device ae0 (promiscuous mode)

________________________________

10:44:44.86678 nexenta -> 192.168.1.124 ETHER Type=0800 (IP), size=98 bytes

10:44:44.86678 nexenta -> 192.168.1.124 IP D=192.168.1.124 S=172.16.47.133 LEN=84, ID=24675, TOS=0x0, TTL=255

10:44:44.86678 nexenta -> 192.168.1.124 ICMP Echo request (ID: 8040 Sequence number: 0)

________________________________

10:44:44.86682 192.168.1.124 -> nexenta ETHER Type=0800 (IP), size=98 bytes

10:44:44.86682 192.168.1.124 -> nexenta IP D=172.16.47.133 S=192.168.1.124 LEN=84, ID=11586, TOS=0x0, TTL=128

10:44:44.86682 192.168.1.124 -> nexenta ICMP Echo reply (ID: 8040 Sequence number: 0)

If you want packets printed as side-by-side hexadecimal and ASCII, like tcpdump -XX, just specify -x 0 in snoop (0 is the offset to start from, so the whole packet is shown). Here's an example command -

shell>snoop -x 0 -t a -r -i testing.snp

15 11:01:37.34663 172.16.47.133 -> 172.16.47.2 ICMP Destination unreachable (UDP port 34901 unreachable)

0: 0050 56f8 6c66 000c 2999 4f2b 0800 4500 .PV.lf..).O+..E.

16: 0070 8e9d 4000 ff01 3647 ac10 2f85 ac10 .p..@...6G../...

32: 2f02 0303 0c7b 0000 0000 4500 00af 2f13 /....{....E.../.

48: 0000 8011 5483 ac10 2f02 ac10 2f85 0035 ....T.../.../..5

64: 8855 009b 69cb fb53 8180 0001 0001 0002 .U..i..S........

80: 0002 0231 3202 3432 0237 3503 3230 3207 ...12.42.75.202.

96: 696e 2d61 6464 7204 6172 7061 0000 0c00 in-addr.arpa....

112: 01c0 0c00 0c00 0100 000b ff00 1807 ..............
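To see how that dump maps onto the headers, here is a minimal Python sketch that decodes the Ethernet header, the IPv4 header, the ICMP type and code, and the ports of the UDP datagram quoted inside the ICMP error. The IHL check is the same test as the `ip[0] & 0x0F = 5` filter earlier. `decode` and `DUMP` are my own names, and the quoted-datagram part assumes, as in this packet, an ICMP error carrying a UDP datagram:

```python
import ipaddress
import struct

# Packet 15 from the snoop -x 0 dump above, offset and ASCII columns removed.
DUMP = """
0050 56f8 6c66 000c 2999 4f2b 0800 4500
0070 8e9d 4000 ff01 3647 ac10 2f85 ac10
2f02 0303 0c7b 0000 0000 4500 00af 2f13
0000 8011 5483 ac10 2f02 ac10 2f85 0035
8855 009b 69cb fb53 8180 0001 0001 0002
0002 0231 3202 3432 0237 3503 3230 3207
696e 2d61 6464 7204 6172 7061 0000 0c00
01c0 0c00 0c00 0100 000b ff00 1807
"""


def mac(raw):
    """Format 6 raw bytes as a colon-separated MAC address."""
    return ":".join("%02x" % b for b in raw)


def decode(hex_text):
    data = bytes.fromhex("".join(hex_text.split()))
    dst, src, ethertype = struct.unpack("!6s6sH", data[:14])
    (ver_ihl, _tos, total_len, _ident, flags_frag,
     ttl, proto, _cksum, ip_src, ip_dst) = struct.unpack("!BBHHHBBH4s4s", data[14:34])
    ihl = ver_ihl & 0x0F               # same test as the filter ip[0] & 0x0F = 5
    icmp = 14 + ihl * 4                # ICMP header follows the IP header
    inner = icmp + 8                   # ICMP error quotes the offending IP datagram
    udp = inner + (data[inner] & 0x0F) * 4
    sport, dport = struct.unpack("!HH", data[udp:udp + 4])
    return {
        "eth_dst": mac(dst),
        "eth_src": mac(src),
        "ethertype": ethertype,
        "ip_version": ver_ihl >> 4,
        "no_ip_options": ihl == 5,
        "total_len": total_len,
        "dont_fragment": bool(flags_frag & 0x4000),
        "ttl": ttl,
        "protocol": proto,
        "src": str(ipaddress.IPv4Address(ip_src)),
        "dst": str(ipaddress.IPv4Address(ip_dst)),
        "icmp_type": data[icmp],
        "icmp_code": data[icmp + 1],
        "quoted_udp_ports": (sport, dport),
    }


print(decode(DUMP))
```

The quoted UDP header starts at byte 62 (14 + 20 + 8 + 20), and its ports `0035` and `8855` decode to 53 and 34901, which is where snoop's "UDP port 34901 unreachable" comes from.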

If you want the output looks like the tshark which prints each protocol header in details, you can use -v, the example output for single packet is shown below -

shell>snoop -v -t a -r -i testing.snp

ETHER: ----- Ether Header -----

ETHER:

ETHER: Packet 1 arrived at 11:01:25.14378

ETHER: Packet size = 98 bytes

ETHER: Destination = 0:50:56:f8:6c:66,

ETHER: Source = 0:c:29:99:4f:2b,

ETHER: Ethertype = 0800 (IP)

ETHER:

IP: ----- IP Header -----

IP:

IP: Version = 4

IP: Header length = 20 bytes

IP: Type of service = 0x00

IP: xxx. .... = 0 (precedence)

IP: ...0 .... = normal delay

IP: .... 0... = normal throughput

IP: .... .0.. = normal reliability

IP: .... ..0. = not ECN capable transport

IP: .... ...0 = no ECN congestion experienced

IP: Total length = 84 bytes

IP: Identification = 36451

IP: Flags = 0x0

IP: .0.. .... = may fragment

IP: ..0. .... = last fragment

IP: Fragment offset = 0 bytes

IP: Time to live = 255 seconds/hops

IP: Protocol = 1 (ICMP)

IP: Header checksum = 5d58

IP: Source address = 172.16.47.133, 172.16.47.133

IP: Destination address = 202.75.42.12, 202.75.42.12

IP: No options

IP:

ICMP: ----- ICMP Header -----

ICMP:

ICMP: Type = 8 (Echo request)

ICMP: Code = 0 (ID: 8122 Sequence number: 0)

ICMP: Checksum = 3bf2

ICMP:
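The verbose output is also handy for checking a header by hand. As a minimal sketch, the Python below rebuilds the 20-byte IPv4 header from the snoop -v fields above (with the checksum field zeroed) and recomputes the RFC 1071 Internet checksum. `ipv4_checksum` is my own helper name, not a snoop feature:

```python
import struct


def ipv4_checksum(header):
    """RFC 1071 Internet checksum: one's complement of the one's complement sum."""
    total = 0
    for (word,) in struct.iter_unpack("!H", header):
        total += word
    while total > 0xFFFF:              # fold carries back into the low 16 bits
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF


# Header rebuilt from the snoop -v fields above, checksum field set to 0.
header = struct.pack(
    "!BBHHHBBH4s4s",
    0x45,                              # version 4, header length 20 bytes (IHL 5)
    0x00,                              # type of service
    84,                                # total length
    36451,                             # identification
    0x0000,                            # flags 0x0, fragment offset 0
    255,                               # time to live
    1,                                 # protocol (ICMP)
    0,                                 # checksum placeholder
    bytes([172, 16, 47, 133]),         # source address
    bytes([202, 75, 42, 12]),          # destination address
)
print(hex(ipv4_checksum(header)))      # 0x5d58, matching "Header checksum = 5d58"
```

Running the same function over a header that still contains its checksum should return 0, which is a quick way to sanity-check a header copied out of a -x 0 dump.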

I think that's all for the snoopy dog. In fact, this post is more about tcpdump vs snoop, but I think both are great, so there's no fight between them. If any of you know more about using snoop, please do share, as I still consider myself a newbie at using it in practice.

Enjoy (;])